Process

I am a multidisciplinary digital artist currently living in Brooklyn, NY. The newest work here reflects my recent interest in art-making methods based on artificial intelligence and machine learning. I use a wide variety of AI tools, from a StyleGAN implementation running in the cloud in a Colab notebook to Stable Diffusion Deforum running from a local install of Automatic1111. I also co-host a Twitter Space every weekday on AI art tools and culture, and I am a featured speaker at NFT NYC on the intersection of AI art and NFTs.

The earlier work here was made while I was living in Bangkok, Thailand, and later in Southern California. I call this work "Generative Photography": I take photographs and then process them using custom software. The older works are based on photographs of Bangkok, where I lived for seven years until 2019; the works in the Flora-Fauna and Rust sections are mostly from the San Diego area, where I lived until 2021, although the very latest are from the East Coast, made since my return.

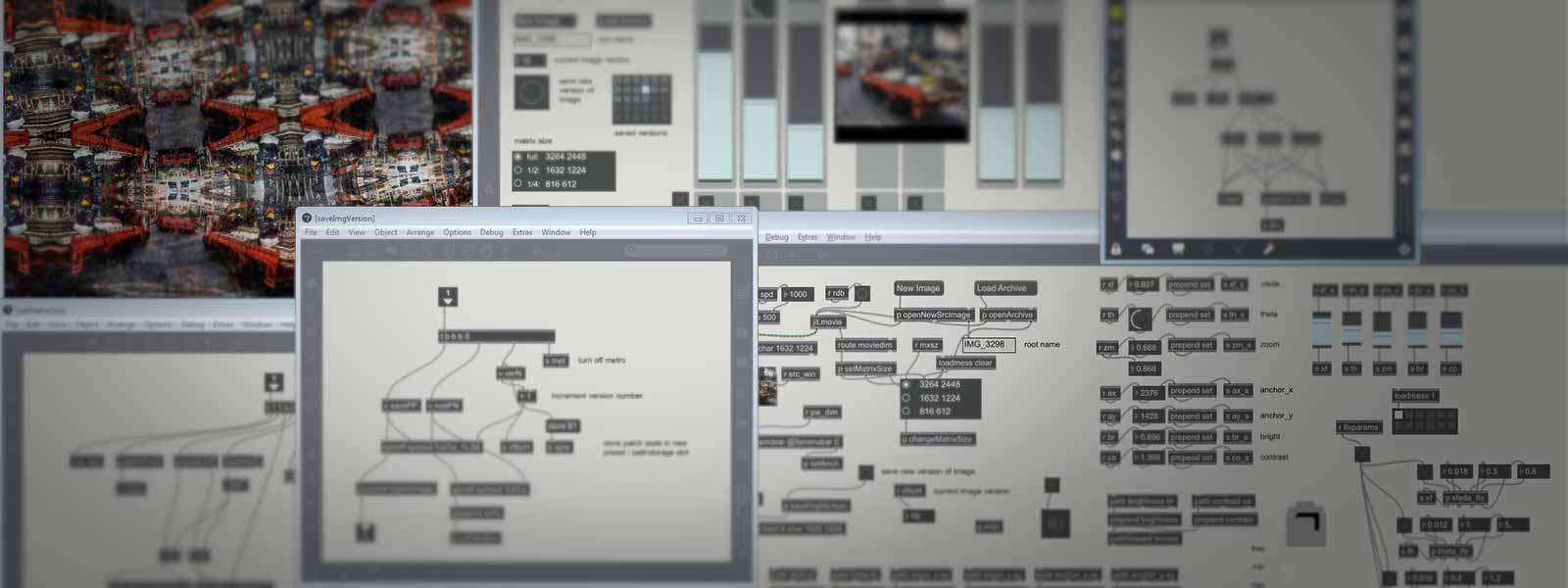

I process the photos I shoot using a "patcher" I wrote in Max/MSP/Jitter (a software development environment published by Cycling '74), which I have been modifying and amending constantly over several years. Max/MSP/Jitter is a visual programming environment where image data can be "patched" through various objects that modify the image in real time. Basically, the patcher takes an image, scales it, rotates it, and reflects portions of it to create a rich, kaleidoscopic effect, then feeds the output image back onto the input image in an iterative process. The iteration does something like "re-fold" parts of the image. I also use a lot of chance and randomization algorithms, and I keep working an image over and over again to see what I come up with.
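For readers who think in code rather than patch cords, here is a rough Python sketch of the kind of loop described above. It is not the actual Max/MSP/Jitter patcher, only an illustration under several assumptions: the image is a small square grid of grayscale values between 0 and 1, the function names (`transform`, `reflect`, `refold`) and the `mix` and `seed` parameters are made up for this example, the random ranges for scale and angle are arbitrary, and pixels that fall outside the frame simply wrap around.

```python
import math
import random


def transform(img, scale, angle):
    """Scale and rotate a square grayscale image about its centre.

    Uses nearest-neighbour sampling; out-of-frame coordinates wrap around
    (an assumption made for this sketch).
    """
    n = len(img)
    c = (n - 1) / 2.0
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    out = [[0.0] * n for _ in range(n)]
    for y in range(n):
        for x in range(n):
            # Map each output pixel back to the source pixel it comes from.
            dx, dy = (x - c) / scale, (y - c) / scale
            sx = int(round(c + dx * cos_a + dy * sin_a)) % n
            sy = int(round(c - dx * sin_a + dy * cos_a)) % n
            out[y][x] = img[sy][sx]
    return out


def reflect(img):
    """Mirror the left half of every row onto the right half."""
    n = len(img)
    return [[row[min(x, n - 1 - x)] for x in range(n)] for row in img]


def refold(img, steps, mix=0.5, seed=0):
    """Repeatedly scale, rotate, and reflect, blending each result
    back onto the image that produced it (the feedback step)."""
    rng = random.Random(seed)  # seeded so a run can be repeated
    cur = img
    for _ in range(steps):
        scale = rng.uniform(0.8, 1.25)        # arbitrary range for this sketch
        angle = rng.uniform(0.0, 2 * math.pi)
        folded = reflect(transform(cur, scale, angle))
        cur = [
            [mix * f + (1 - mix) * c for f, c in zip(frow, crow)]
            for frow, crow in zip(folded, cur)
        ]
    return cur
```

Calling `refold(img, steps=5)` on a grid of pixel values runs five passes. With `mix=1.0` each pass fully replaces the image with its folded version, so the result is mirror-symmetric; lower values let traces of earlier passes show through, which is roughly where the "re-folded" look comes from.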

-Robert Stratton aka madbutter

Contact info:

info@madbutter.com

Robert Stratton